Recently I had to research how to deploy an API to Azure API Management (APIM) using PowerShell via Azure DevOps. If you are looking for any of the following, you're in the right place:

- Deploy an API to API Management using Azure DevOps

- Build a CI/CD pipeline for API Management

- Deploy an API to API Management using PowerShell

- Deploy an OpenAPI/Swagger definition to Azure API Management using Azure DevOps

These are your options:

- Use the APIM DevOps Resource Kit

- Azure DevOps add-ons

- PowerShell

I find the APIM DevOps Resource Kit complicated and a bit scary because it deploys using ARM templates (one wrong configuration and you can end up breaking the entire APIM instance). The add-ons are limited, especially if you need to deploy a policy.

In this post, I'm going to cover the PowerShell option. Below is the full deployment script.

```powershell
# Connect to Azure using the Az module
Connect-AzAccount

# List all subscriptions, then select the one that hosts the APIM instance
Get-AzSubscription
Set-AzContext -Subscription "{subscription Name}"

$resourceGroupName = "{resource group Name}"
$apimServiceName   = "{APIM instance name}"
$openapiSpecs      = "{open API specs JSON file name}"
$apiGlobalPolicy   = "{API Policy XML file name}"
$apiPath           = "{API Path}"
$apiId             = "{API Id}"
$apiName           = "{API Name}"
$apiVersion        = "{API Version}"
$apiProtocols      = @('https')
$apiServiceUrl     = "{Backend URL}"

# Create the API Management context
$context = New-AzApiManagementContext -ResourceGroupName $resourceGroupName -ServiceName $apimServiceName

# Check whether a version set already exists; if not, create a new one, otherwise reuse it.
# A version set is unique per API: an API with 2 versions has 1 version set containing 2 APIs.
Write-Host "[VERSION SET] Performing lookup."
$versionSetLookup = Get-AzApiManagementApiVersionSet -Context $context |
    Where-Object { $_.DisplayName -eq $apiName } |
    Sort-Object -Property ApiVersionSetId -Descending |
    Select-Object -First 1

if ($null -eq $versionSetLookup)
{
    Write-Host "[VERSION SET] Version set NOT FOUND for: $apiName, creating a new one."
    $versionSet = New-AzApiManagementApiVersionSet -Context $context -Name $apiName -Scheme Segment -Description $apiName
    # Use the version set identifier (same property as in the lookup branch below)
    $versionSetId = $versionSet.ApiVersionSetId
    Write-Host "[VERSION SET] Created new version set, id: $versionSetId"
}
else
{
    Write-Host "[VERSION SET] Version set FOUND for: $apiName, using existing one."
    $versionSetId = $versionSetLookup.ApiVersionSetId
    Write-Host "[VERSION SET] Reusing existing version set, id: $versionSetId"
}

# Import the API from the OpenAPI specs
Write-Host "[IMPORT] Importing OpenAPI: $openapiSpecs"
$api = Import-AzApiManagementApi -Context $context -SpecificationPath $openapiSpecs -SpecificationFormat OpenApi -Path $apiPath -ApiId "$apiId$apiVersion" -ApiVersion $apiVersion -ApiVersionSetId $versionSetId -ServiceUrl $apiServiceUrl -Protocol $apiProtocols
# $(...) is needed so the property is expanded inside the string
Write-Host "[IMPORT] Imported API: $($api.ApiId)"

# Apply the API-level ("global") policy if the file exists
if (Test-Path $apiGlobalPolicy -PathType Leaf)
{
    Write-Host "[POLICY] Global Policy found, applying: $apiGlobalPolicy"
    Set-AzApiManagementPolicy -Context $context -PolicyFilePath $apiGlobalPolicy -ApiId $api.ApiId
    Write-Host "[POLICY] Global Policy applied."
}
else
{
    Write-Host "[POLICY] Global Policy NOT FOUND, skipping: $apiGlobalPolicy"
}
```
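A detail worth knowing when writing log lines like the ones in this script: inside a double-quoted string PowerShell expands `$api` but not the `.ApiId` property access, so `"$api.ApiId"` prints the object's string form followed by the literal text `.ApiId`. The small, Azure-free illustration below uses a stand-in object (`$api` here is a hypothetical `[pscustomobject]`, not a real `Import-AzApiManagementApi` result) to show the difference.

```powershell
# Stand-in for the object returned by Import-AzApiManagementApi (hypothetical values)
$api = [pscustomobject]@{ ApiId = 'petstorev1'; Name = 'Petstore' }

# Only $api is expanded; ".ApiId" is appended as literal text
$wrong = "[IMPORT] Imported API: $api.ApiId"

# The subexpression operator $(...) evaluates the property access first
$right = "[IMPORT] Imported API: $($api.ApiId)"

# The -f format operator is another way to avoid the pitfall
$alsoRight = '[IMPORT] Imported API: {0}' -f $api.ApiId

# Note: Write-Host "text" + $api.ApiId does NOT concatenate. In argument mode,
# Write-Host receives "+" as a separate argument and prints it. Build the string first.
Write-Host $wrong
Write-Host $right
Write-Host $alsoRight
```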

Now, how do we integrate this with Azure DevOps?

Here's what it looks like:

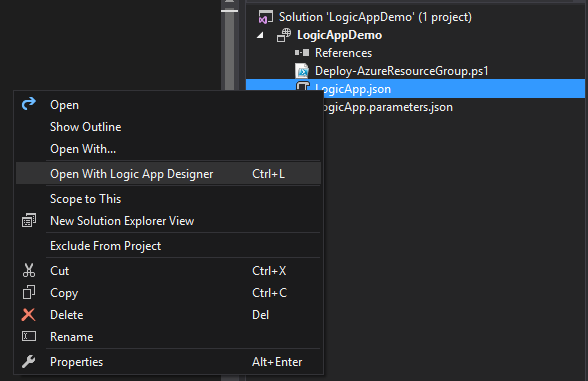

Build Pipeline Configuration

1. Create a Copy Files task that copies the .json (OpenAPI spec) and .xml (policy) files to the build artifact (`drop`), which the release script reads from.

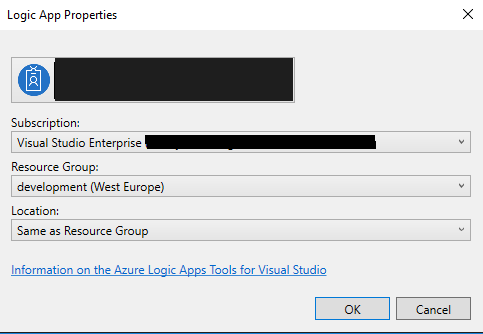

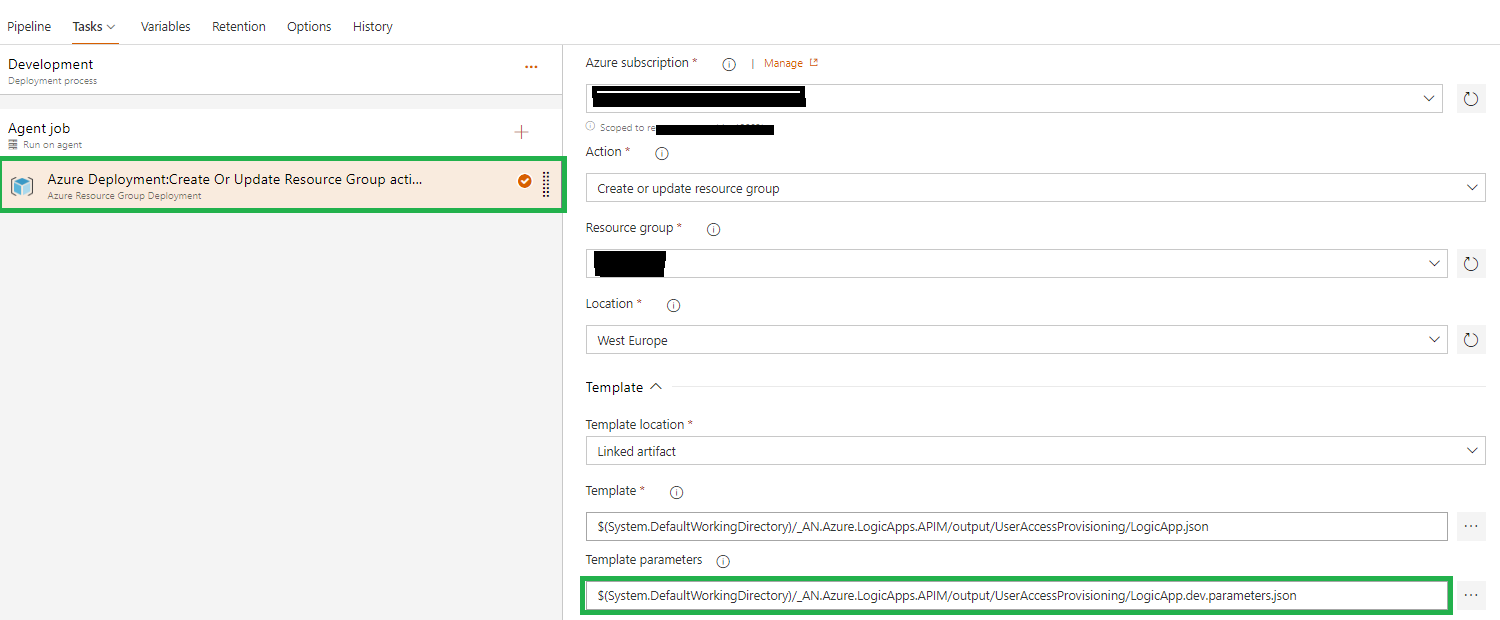
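If you prefer a script step over the Copy Files task, a rough PowerShell equivalent is sketched below. It is an assumption-laden sketch, not the author's setup: `Copy-ApimArtifacts` is a hypothetical helper, and the `apim` source folder in the usage comment is an assumed layout. It copies every `.json` and `.xml` file from a source folder into a staging folder; in a pipeline you would typically pass `$(Build.SourcesDirectory)` and `$(Build.ArtifactStagingDirectory)` and then publish the staging folder as the `drop` artifact.

```powershell
# Hypothetical helper: copy OpenAPI specs (*.json) and policies (*.xml) to a staging folder,
# mirroring what the Copy Files task does in the build pipeline.
function Copy-ApimArtifacts {
    param(
        [Parameter(Mandatory)] [string] $SourceDir,
        [Parameter(Mandatory)] [string] $TargetDir
    )

    # Create the target folder if it does not exist yet (no error if it does)
    New-Item -ItemType Directory -Path $TargetDir -Force | Out-Null

    # -Filter accepts a single pattern, so query each extension separately
    $files = @('*.json', '*.xml') | ForEach-Object {
        Get-ChildItem -Path $SourceDir -Filter $_ -File
    }

    foreach ($file in $files) {
        Copy-Item -Path $file.FullName -Destination $TargetDir -Force
    }

    # Output the copied file names so callers can log or verify them
    $files | ForEach-Object { $_.Name }
}

# Usage in a pipeline script step (assumed folder layout):
# Copy-ApimArtifacts -SourceDir "$(Build.SourcesDirectory)\apim" -TargetDir "$(Build.ArtifactStagingDirectory)"
```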

Release Pipeline Configuration

- Task group -> APIM-Dev Deploy-Standard. I had to create one task group per environment because I couldn't find a way to parameterize the Azure subscription.

PowerShell script with variables:

```powershell
$resourceGroupName = "{resource group Name}"
$apimServiceName   = "{APIM instance name}"

# The pipeline macros below (e.g. System.ArtifactsDirectory) are substituted by Azure DevOps before the script runs
$openapiSpecs    = "$(System.ArtifactsDirectory)\$(Release.PrimaryArtifactSourceAlias)\drop\$(OpenAPI-Filename)"
$apiGlobalPolicy = "$(System.ArtifactsDirectory)\$(Release.PrimaryArtifactSourceAlias)\drop\$(GlobalPolicy-Filename)"
# Folder that holds the operation policy files
$p               = "$(System.ArtifactsDirectory)\$(Release.PrimaryArtifactSourceAlias)\drop\"

$apiPath       = "$(API-Path)"
$apiId         = "$(API-Id)"
$apiVersion    = "$(API-Version)"
$apiName       = "$(API-Name)"
$apiProtocols  = @('https')
$apiServiceUrl = "$(API-ServiceUrl)"

# Up to 9 operation-level policies: operation id + policy file name (leave both empty to skip a slot)
$OpId1 = "$(OperationPolicy-OpId1)"; $OpId1Policy = "$(OperationPolicy-OpId1-PolicyFile)"
$OpId2 = "$(OperationPolicy-OpId2)"; $OpId2Policy = "$(OperationPolicy-OpId2-PolicyFile)"
$OpId3 = "$(OperationPolicy-OpId3)"; $OpId3Policy = "$(OperationPolicy-OpId3-PolicyFile)"
$OpId4 = "$(OperationPolicy-OpId4)"; $OpId4Policy = "$(OperationPolicy-OpId4-PolicyFile)"
$OpId5 = "$(OperationPolicy-OpId5)"; $OpId5Policy = "$(OperationPolicy-OpId5-PolicyFile)"
$OpId6 = "$(OperationPolicy-OpId6)"; $OpId6Policy = "$(OperationPolicy-OpId6-PolicyFile)"
$OpId7 = "$(OperationPolicy-OpId7)"; $OpId7Policy = "$(OperationPolicy-OpId7-PolicyFile)"
$OpId8 = "$(OperationPolicy-OpId8)"; $OpId8Policy = "$(OperationPolicy-OpId8-PolicyFile)"
$OpId9 = "$(OperationPolicy-OpId9)"; $OpId9Policy = "$(OperationPolicy-OpId9-PolicyFile)"

# "x" holds the policy file name for each operation
$policies = @()
$policies += @{ "OpId" = $OpId1; "x" = $OpId1Policy }
$policies += @{ "OpId" = $OpId2; "x" = $OpId2Policy }
$policies += @{ "OpId" = $OpId3; "x" = $OpId3Policy }
$policies += @{ "OpId" = $OpId4; "x" = $OpId4Policy }
$policies += @{ "OpId" = $OpId5; "x" = $OpId5Policy }
$policies += @{ "OpId" = $OpId6; "x" = $OpId6Policy }
$policies += @{ "OpId" = $OpId7; "x" = $OpId7Policy }
$policies += @{ "OpId" = $OpId8; "x" = $OpId8Policy }
$policies += @{ "OpId" = $OpId9; "x" = $OpId9Policy }

Write-Host "Initializing APIM Resource Group: $resourceGroupName, API ServiceName: $apimServiceName"
Write-Host "API Definition: $openapiSpecs, API Path: $apiPath, API Id: $apiId, API Version: $apiVersion"

# Create the API Management context
$context = New-AzApiManagementContext -ResourceGroupName $resourceGroupName -ServiceName $apimServiceName

# Check whether a version set already exists; if not, create a new one, otherwise reuse it.
# A version set is unique per API: an API with 2 versions has 1 version set containing 2 APIs.
Write-Host "[VERSION SET] Performing lookup."
$versionSetLookup = Get-AzApiManagementApiVersionSet -Context $context |
    Where-Object { $_.DisplayName -eq $apiName } |
    Sort-Object -Property ApiVersionSetId -Descending |
    Select-Object -First 1

if ($null -eq $versionSetLookup)
{
    Write-Host "[VERSION SET] Version set NOT FOUND for: $apiName, creating a new one."
    $versionSet = New-AzApiManagementApiVersionSet -Context $context -Name $apiName -Scheme Segment -Description $apiName
    $versionSetId = $versionSet.ApiVersionSetId
    Write-Host "[VERSION SET] Created new version set, id: $versionSetId"
}
else
{
    Write-Host "[VERSION SET] Version set FOUND for: $apiName, using existing one."
    $versionSetId = $versionSetLookup.ApiVersionSetId
    Write-Host "[VERSION SET] Reusing existing version set, id: $versionSetId"
}

# Pick the import format: WADL if the path mentions it, otherwise OpenAPI
if ($openapiSpecs -like "*wadl*") { $format = "Wadl" }
else { $format = "OpenApi" }

Write-Host "[IMPORT] Importing OpenAPI: $openapiSpecs, format: $format"
$api = Import-AzApiManagementApi -Context $context -SpecificationPath $openapiSpecs -SpecificationFormat $format -Path $apiPath -ApiId "$apiId$apiVersion" -ApiVersion $apiVersion -ApiVersionSetId $versionSetId -ServiceUrl $apiServiceUrl -Protocol $apiProtocols
# $(...) is needed so the property is expanded inside the string
Write-Host "[IMPORT] Imported API: $($api.ApiId)"

# Apply the API-level ("global") policy if the file exists
if (Test-Path $apiGlobalPolicy -PathType Leaf)
{
    Write-Host "[POLICY] Global Policy found, applying: $apiGlobalPolicy"
    Set-AzApiManagementPolicy -Context $context -PolicyFilePath $apiGlobalPolicy -ApiId $api.ApiId -Format "application/vnd.ms-azure-apim.policy.raw+xml"
    Write-Host "[POLICY] Global Policy applied."
}
else
{
    Write-Host "[POLICY] Global Policy NOT FOUND, skipping: $apiGlobalPolicy"
}

# Apply the operation-level policies
$policyctr = 1
foreach ($policy in $policies)
{
    # Check the raw values: $p + $policy.x is never empty, so test the inputs instead
    if ([string]::IsNullOrWhiteSpace($policy.OpId) -or [string]::IsNullOrWhiteSpace($policy.x))
    {
        Write-Host "[POLICY] Policy: $policyctr has no operation id or policy xml defined. Skipping."
    }
    else
    {
        $policyFile = $p + $policy.x
        if (Test-Path $policyFile -PathType Leaf)
        {
            Write-Host "[POLICY] Applying: $policyFile on OpId: $($policy.OpId)"
            Set-AzApiManagementPolicy -Context $context -PolicyFilePath $policyFile -ApiId $api.ApiId -OperationId $policy.OpId -Format "application/vnd.ms-azure-apim.policy.raw+xml"
        }
        else
        {
            Write-Host "[POLICY] Policy: $policyctr file not found: $policyFile. Skipping."
        }
    }
    $policyctr++
}

Write-Host "[POLICY] Applied operation policies."
```
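The format check `$openapiSpecs -like "*wadl*"` runs against the full path, so it also returns `Wadl` when "wadl" appears only in a folder name. A slightly stricter sketch looks at the file name alone. `Get-SpecificationFormat` is a hypothetical helper, and it deliberately covers only the two formats the script already handles (`Wadl` and `OpenApi`); other `-SpecificationFormat` values are left out.

```powershell
# Hypothetical helper: choose the -SpecificationFormat value from the file name only
function Get-SpecificationFormat {
    param([Parameter(Mandatory)] [string] $SpecPath)

    # Ignore the folder part so a directory such as "wadl-samples" does not change the result.
    # Note: on Linux agents only "/" is treated as a path separator by GetFileName.
    $fileName = [System.IO.Path]::GetFileName($SpecPath)

    # -like is case-insensitive, so "api.wadl", "api.WADL" and "api.wadl.xml" all match
    if ($fileName -like '*wadl*') { return 'Wadl' }
    return 'OpenApi'
}

# Usage inside the task group script:
# $format = Get-SpecificationFormat -SpecPath $openapiSpecs
```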

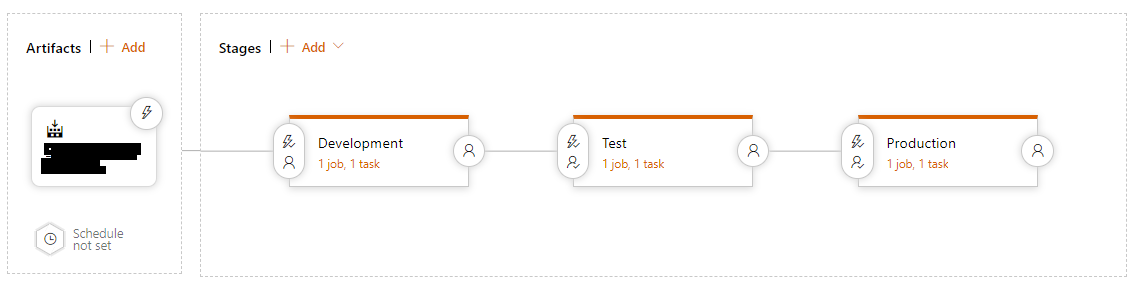

2. In the release pipeline, add the task group and parameterize it, so that when you clone the pipeline you only need to change the release pipeline variables (very handy).

The release pipeline variables (the only values you need to change when cloning):
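The nine `OperationPolicy-OpIdN` / `OperationPolicy-OpIdN-PolicyFile` pairs are where most of the per-release editing happens. If that list grows, one option is to build the policy list in a loop and drop blank slots up front. The sketch below is hypothetical: `Get-OperationPolicyList` takes the already-expanded values as an array of `OpId`/`File` pairs (in the task group you would still fill these from the pipeline macros) and uses `Join-Path` instead of string concatenation to build each path.

```powershell
# Hypothetical helper: turn (operation id, policy file) pairs into a clean list,
# skipping slots where either value was left empty in the release variables.
function Get-OperationPolicyList {
    param(
        [Parameter(Mandatory)] [string] $DropFolder,
        [Parameter(Mandatory)] [object[]] $Pairs   # each item: @{ OpId = '...'; File = '...' }
    )

    $slot = 1
    foreach ($pair in $Pairs) {
        if ([string]::IsNullOrWhiteSpace($pair.OpId) -or [string]::IsNullOrWhiteSpace($pair.File)) {
            # Write-Host goes to the host, so it does not pollute the function's output
            Write-Host "[POLICY] Slot $slot is empty, skipping."
        }
        else {
            # Emit one object per usable slot; Join-Path handles the separator
            [pscustomobject]@{
                OpId       = $pair.OpId
                PolicyFile = Join-Path $DropFolder $pair.File
            }
        }
        $slot++
    }
}

# Example with hypothetical operation ids
$pairs = @(
    @{ OpId = 'get-pets'; File = 'get-pets.xml' },
    @{ OpId = '';         File = '' }
)
$list = @(Get-OperationPolicyList -DropFolder 'drop' -Pairs $pairs)
```

Each returned item can then be passed to `Set-AzApiManagementPolicy -OperationId $item.OpId -PolicyFilePath $item.PolicyFile` after a `Test-Path` check, as in the task group script.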

In action:
